7.2.4. Example: Object Detection using SSD

Sample program demonstrating inference using the SSD-ResNet34 trained model on the COCO dataset

Note

The trained model and some source code used in this example have been partially modified or directly sourced from intel / ai-reference-models. All of these components are licensed under the Apache License, Version 2.0.

The COCO dataset is licensed under CC BY 4.0, and we exclusively use images licensed under CC BY 2.0.

Execution Method

The first execution performs the following downloads (subsequent runs will skip these steps).

By default, the download location is /tmp/mlsdk_ssd_inference/.

Python packages

$ cd /opt/pfn/pfcomp/codegen/MLSDK/examples/ssd_inference

$ ./run_ssd_inference.sh /tmp/mlsdk_ssd_inference/coco/val2017/000000363666.jpg --device mncore2:auto

Expected Output

A detection result will be saved in the current working directory by default as a out_mncore_-prefixed file.

Additionally, a out_torch_-prefixed file will also be generated - this is a result from running the same task using PyTorch.

The comparison between them will be performed using the structural similarity index measure (SSIM) algorithm.

Tip

To specify the directory for outputting result files, you can use the --out_img_dir option.

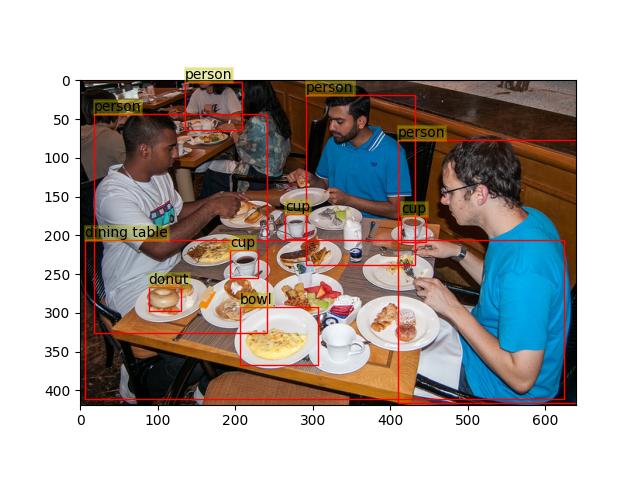

Detection results (

./out_mncore_000000363666.jpg)

Fig. 7.4 Object detection using SSD-ResNet34 on MN-Core 2

Log output (

SSIM scoreis considered acceptable if greater than or equal to 0.99, indicating equivalent inference result to PyTorch)

Drawing detection result => /opt/pfn/pfcomp/codegen/MLSDK/examples/ssd_inference/out_mncore_000000363666.jpg

Drawing detection result => /opt/pfn/pfcomp/codegen/MLSDK/examples/ssd_inference/out_torch_000000363666.jpg

SSIM score: 0.9959848160827895

Scripts

1#! /bin/bash

2

3set -eux -o pipefail

4

5EXAMPLE_NAME="mlsdk_ssd_inference"

6VENV_DIR=${VENV_DIR:-"/tmp/${EXAMPLE_NAME}/venv"}

7EXTERNAL_DIR=${EXTERNAL_DIR:-"/tmp/${EXAMPLE_NAME}/external"}

8COCO_DIR=${COCO_DIR:-"/tmp/${EXAMPLE_NAME}/coco"}

9OUT_DIR=${OUT_DIR:-"/tmp/${EXAMPLE_NAME}/out"}

10

11CURRENT_DIR=$(realpath $(dirname $0))

12CODEGEN_DIR=$(realpath ${CURRENT_DIR}/../../../)

13BUILD_DIR="${CODEGEN_DIR}/build"

14

15### Prepare and source venv/

16

17if [[ ! -d ${VENV_DIR} ]]; then

18 python3 -m venv --system-site-packages ${VENV_DIR}

19 source ${VENV_DIR}/bin/activate

20 pip3 install -r ${CURRENT_DIR}/requirements.txt

21else

22 source ${VENV_DIR}/bin/activate

23fi

24

25### Prepare external/ items

26

27mkdir -p ${EXTERNAL_DIR}

28pushd ${EXTERNAL_DIR}

29

30if [[ ! -d ai-reference-models ]]; then

31 git clone 'https://github.com/intel/ai-reference-models.git' ai-reference-models --depth 1 --branch v3.3

32fi

33if [[ ! -f resnet34-ssd1200.pth ]]; then

34 # For downloading the trained model, please refer to this documentation.

35 # Ref: https://github.com/intel/ai-reference-models/blob/main/models_v2/pytorch/ssd-resnet34/inference/cpu/CONTAINER.md

36 wget --no-check-certificate \

37 'https://docs.google.com/uc?export=download&id=13kWgEItsoxbVKUlkQz4ntjl1IZGk6_5Z' \

38 -O resnet34-ssd1200.pth

39fi

40

41popd

42

43### Prepare coco/ items

44

45mkdir -p ${COCO_DIR}

46pushd ${COCO_DIR}

47

48# download the COCO datasets and annotations

49if [[ ! -d val2017 ]]; then

50 curl -O 'http://images.cocodataset.org/zips/val2017.zip'

51 unzip val2017.zip

52 rm -f val2017.zip

53fi

54if [[ ! -d annotations ]]; then

55 curl -O 'http://images.cocodataset.org/annotations/annotations_trainval2017.zip'

56 unzip annotations_trainval2017.zip

57 rm -f annotations_trainval2017.zip

58fi

59# fetch images and annotations for inference

60if [[ ! -f annotations/fetched_annotations_eval.json ]]; then

61 python3 ${CURRENT_DIR}/coco_preparation.py \

62 -a annotations/instances_val2017.json \

63 -i val2017 \

64 -f annotations/fetched_annotations_eval.json

65fi

66

67popd

68

69### Run ssd_inference.py

70

71source "${BUILD_DIR}/codegen_pythonpath.sh"

72

73# Do not split a small h/w image among L1/L2 blocks.

74export CODEGEN_SPATIAL_SPLIT_THRESHOLD_L2B_L1B=32

75

76PYTHONPATH=${PYTHONPATH}:"${EXTERNAL_DIR}/ai-reference-models/models_v2/pytorch/ssd-resnet34/inference/cpu"

77python3 ${CURRENT_DIR}/ssd_inference.py \

78 --model_path "${EXTERNAL_DIR}/resnet34-ssd1200.pth" \

79 --coco_data_path "${COCO_DIR}/val2017" \

80 --coco_annotation_path "${COCO_DIR}/annotations/fetched_annotations_eval.json" \

81 --out_img_dir "${CURRENT_DIR}" \

82 --outdir "${OUT_DIR}" \

83 --option_json "${CODEGEN_DIR}/preset_options/O1.json" \

84 ${@}

1import argparse

2import os

3from pathlib import Path

4from typing import Mapping, Optional

5

6import cv2

7import matplotlib

8import matplotlib.patches as patches

9import matplotlib.pyplot as plt

10import numpy as np

11import torch

12import torch.utils.data

13from PIL import Image, ImageFile

14from skimage.metrics import structural_similarity as ssim

15

16# isort: off

17

18# MLSDK modules

19from mlsdk import (

20 MNDevice,

21 Context,

22 storage,

23 CacheOptions,

24 TensorLike,

25)

26

27# following modules are from:

28# [ai-reference-models repository](https://github.com/intel/ai-reference-models/tree/main/models_v2/pytorch/ssd-resnet34/inference/cpu) # NOQA: B950

29from infer import dboxes_R34_coco

30from ssd_r34 import SSD_R34

31from utils import COCODetection, Encoder, SSDTransformer

32

33# isort: on

34

35# No GUI output

36matplotlib.use("Agg")

37

38

39# label_num = 81 and strides = [3, 3, 2, 2, 2, 2] are from original source in:

40# https://github.com/intel/ai-reference-models/tree/main/models_v2/pytorch/ssd-resnet34/inference/cpu/infer.py

41def load_model(

42 path: str | os.PathLike,

43 label_num: int = 81,

44 strides: tuple[int, ...] = (3, 3, 2, 2, 2, 2),

45) -> torch.nn.Module:

46 # Create class object

47 model = SSD_R34(label_num, strides=strides)

48 # Load pretrained model's parameters

49 model.load_state_dict(

50 torch.load(path, map_location=lambda storage, loc: storage)["model"]

51 )

52 assert isinstance(model, torch.nn.Module)

53 return model

54

55

56# Load an input image given as a path

57def load_image(

58 img_path: str | os.PathLike,

59 img_size: tuple[int, int] = (1200, 1200),

60) -> tuple[ImageFile.ImageFile, torch.Tensor]:

61 # Resize input img for model

62 orig_img = Image.open(img_path)

63

64 # Convert img to tensor and change shape

65 resized_img = orig_img.resize(img_size)

66 converted_img_np = np.array(resized_img).transpose(

67 2, 0, 1

68 ) # (C,H,W), assuming dtype=uint8

69 converted_img = (

70 torch.from_numpy(converted_img_np).contiguous().unsqueeze(dim=0)

71 ) # (1,C,H,W)

72

73 return orig_img, converted_img

74

75

76# Decode a model's output for an input image

77def decode_outputs(

78 out_locs: torch.Tensor,

79 out_labels: torch.Tensor,

80 encoder: Encoder,

81 criteria: float = 0.50,

82 max_output: int = 200,

83 device: int = 0,

84) -> list[tuple[torch.Tensor, torch.Tensor, torch.Tensor]]:

85 try:

86 decoded_outputs = encoder.decode_batch(

87 out_locs,

88 out_labels,

89 criteria=criteria,

90 max_output=max_output,

91 device=device,

92 )

93 except Exception as e:

94 print(f"Error in decode_outputs: {type(e)} - {e}")

95 return []

96

97 results = []

98 idx = 0

99 for i in range(decoded_outputs[3].size(0)):

100 detection_num = decoded_outputs[3][i].item()

101 idx_range = idx + detection_num

102 results.append(

103 (

104 decoded_outputs[0][idx:idx_range],

105 decoded_outputs[1][idx:idx_range],

106 decoded_outputs[2][idx:idx_range],

107 )

108 )

109 idx += detection_num

110

111 return results

112

113

114# This function is originally from:

115# [ai-reference-models](https://github.com/intel/ai-reference-models/blob/main/models_v2/pytorch/ssd-resnet34/inference/cpu/utils.py) # NOQA: B950

116# Drawing bboxes and labels for detected objects

117def draw_patches( # NOQA: CFQ002

118 img: ImageFile.ImageFile,

119 img_path: Path,

120 bboxes: torch.Tensor,

121 labels: torch.Tensor,

122 order: str = "ltrb",

123 label_map: Mapping[int, str] | None = None,

124 bbox_alpha: float = 1.0,

125 bbox_linewidth: int = 1,

126 label_alpha: float = 0.3,

127 label_linewidth: int = 2,

128 text_size: int = 10,

129) -> None:

130 img_np = np.array(img)

131 labels_np = labels.detach().numpy()

132 bboxes_np = bboxes.detach().numpy()

133

134 # From labels_np (ndarray[int]) to labels_list (list[str])

135 if label_map is not None:

136 labels_list = [

137 label_map.get(int(label_i), "(no label)") for label_i in labels_np

138 ]

139 else:

140 # Use an int value as a label str

141 labels_list = [str(int(label_i)) for label_i in labels_np]

142

143 if order == "ltrb":

144 xmin, ymin, xmax, ymax = (

145 bboxes_np[:, 0],

146 bboxes_np[:, 1],

147 bboxes_np[:, 2],

148 bboxes_np[:, 3],

149 )

150 cx, cy, w, h = (xmin + xmax) / 2, (ymin + ymax) / 2, xmax - xmin, ymax - ymin

151 else:

152 cx, cy, w, h = (

153 bboxes_np[:, 0],

154 bboxes_np[:, 1],

155 bboxes_np[:, 2],

156 bboxes_np[:, 3],

157 )

158

159 htot, wtot, _ = img_np.shape

160 cx *= wtot

161 cy *= htot

162 w *= wtot

163 h *= htot

164

165 plt.imshow(img_np)

166 ax = plt.gca()

167 for cx_i, cy_i, w_i, h_i, label_i in zip(cx, cy, w, h, labels_list):

168 if label_i == "background":

169 continue

170 ax.add_patch(

171 patches.Rectangle(

172 (cx_i - 0.5 * w_i, cy_i - 0.5 * h_i),

173 w_i,

174 h_i,

175 fill=False,

176 color="r",

177 alpha=bbox_alpha,

178 linewidth=bbox_linewidth,

179 )

180 )

181 bbox_props = dict(

182 boxstyle="square,pad=0",

183 fc="y",

184 ec="y",

185 alpha=label_alpha,

186 linewidth=label_linewidth,

187 )

188 ax.text(

189 cx_i - 0.5 * w_i,

190 cy_i - 0.5 * h_i,

191 label_i,

192 ha="left",

193 va="bottom",

194 size=text_size,

195 bbox=bbox_props,

196 )

197

198 plt.savefig(img_path)

199 plt.clf()

200 plt.close()

201

202

203# Fetch results satisfying threshold and draw bounding box on the given input image

204def draw_detection_result( # NOQA: CFQ002

205 out_locs: torch.Tensor,

206 out_labels: torch.Tensor,

207 img: ImageFile.ImageFile,

208 img_path: Path,

209 encoder: Encoder,

210 threshold: float,

211 label_info: dict[int, str],

212) -> None:

213

214 decoded_output = decode_outputs(out_locs, out_labels, encoder)

215 if not decoded_output:

216 print("no objects have been detected")

217 return

218

219 bboxes, labels, scores = decoded_output[0]

220 detection_mask = scores > threshold

221 fetched_bboxes = bboxes[detection_mask]

222 fetched_labels = labels[detection_mask]

223 draw_patches(img, img_path, fetched_bboxes, fetched_labels, label_map=label_info)

224

225

226def calc_ssim(

227 lhs_img_path: Path,

228 rhs_img_path: Path,

229) -> float:

230 lhs_img = cv2.imread(lhs_img_path)

231 rhs_img = cv2.imread(rhs_img_path)

232 assert lhs_img.shape == rhs_img.shape

233

234 # Convert to gray scale (only image structure is necessary for SSIM)

235 lhs_img_gray = cv2.cvtColor(lhs_img, cv2.COLOR_BGR2GRAY)

236 rhs_img_gray = cv2.cvtColor(rhs_img, cv2.COLOR_BGR2GRAY)

237

238 return float(ssim(lhs_img_gray, rhs_img_gray)) # type: ignore[no-untyped-call]

239

240

241def run_infer( # NOQA: CFQ002

242 *,

243 img_path: Path,

244 model_path: Path,

245 coco_data_path: Path,

246 coco_annotation_path: Path,

247 out_img_dir: Path,

248 threshold: float,

249 device_name: str,

250 outdir: str,

251 option_json_path: Optional[Path] = None,

252) -> None:

253 # Create Dataset object

254 IMG_SIZE = [1200, 1200]

255 STRIDES = [3, 3, 2, 2, 2, 2]

256 default_boxes = dboxes_R34_coco(IMG_SIZE, STRIDES)

257 transformer = SSDTransformer(default_boxes, tuple(IMG_SIZE), val=True)

258 coco = COCODetection(coco_data_path, coco_annotation_path, transformer)

259

260 # Create encoder for decode process

261 encoder = Encoder(default_boxes)

262

263 # Load and prepare images

264 orig_img, converted_img = load_image(img_path)

265 infer_input = {"image": converted_img}

266 img_name = img_path.name

267

268 # Create pretrained model object

269 model = load_model(model_path)

270 model.eval()

271

272 # Inference function for Context.compile

273 def infer_fn(sample: dict[str, TensorLike]) -> dict[str, TensorLike]:

274 with torch.no_grad():

275 locs, labels = model(sample["image"].float() / 255.0)

276 return {"locs": locs, "labels": labels}

277

278 device = MNDevice(device_name)

279 context = Context(device)

280 Context.switch_context(context)

281

282 context.registry.register("model", model)

283

284 compile_options = {}

285 if option_json_path is not None:

286 compile_options = {"option_json": str(option_json_path)}

287

288 compiled_infer_fn = context.compile(

289 infer_fn,

290 infer_input,

291 storage.path(outdir),

292 options=compile_options,

293 cache_options=CacheOptions(outdir + "/cache"),

294 )

295

296 out_mncore = compiled_infer_fn(infer_input)

297 out_locs_mncore, out_labels_mncore = (

298 out_mncore["locs"].cpu(),

299 out_mncore["labels"].cpu(),

300 )

301

302 mncore_img_path = out_img_dir / ("out_mncore_" + img_name)

303 print(f"Drawing detection result => {mncore_img_path}")

304 draw_detection_result(

305 out_locs_mncore,

306 out_labels_mncore,

307 orig_img,

308 mncore_img_path,

309 encoder,

310 threshold,

311 label_info=coco.label_info,

312 )

313

314 out_torch = infer_fn(infer_input)

315 out_locs_torch, out_labels_torch = (

316 out_torch["locs"].cpu(),

317 out_torch["labels"].cpu(),

318 )

319

320 torch_img_path = out_img_dir / ("out_torch_" + img_name)

321 print(f"Drawing detection result => {torch_img_path}")

322 draw_detection_result(

323 out_locs_torch,

324 out_labels_torch,

325 orig_img,

326 torch_img_path,

327 encoder,

328 threshold,

329 label_info=coco.label_info,

330 )

331

332 score = calc_ssim(mncore_img_path, torch_img_path)

333 print(f"SSIM score: {score}")

334 assert score > 0.99, "Generated images differ."

335

336

337def main() -> None:

338 parser = argparse.ArgumentParser(

339 description="Run SSD-ResNet34-1200 model for input image"

340 )

341 parser.add_argument("img_path", type=Path, help="Path to input image")

342 parser.add_argument(

343 "--model_path",

344 type=Path,

345 required=True,

346 help="Path to trained SSD model (e.g. resnet34-ssd1200.pth)",

347 )

348 parser.add_argument(

349 "--coco_data_path", type=Path, required=True, help="Path to the COCO dataset"

350 )

351 parser.add_argument(

352 "--coco_annotation_path",

353 type=Path,

354 required=True,

355 help="Path to annotation data (JSON) corresponding to the COCO dataset",

356 )

357 parser.add_argument(

358 "--out_img_dir",

359 type=Path,

360 default=Path("."),

361 help="Path to directory to output detection result images",

362 )

363 parser.add_argument(

364 "--threshold",

365 type=float,

366 default=0.4,

367 help="""

368 Detection threshold (0.0-1.0), a smaller threshold make object detecting

369 more sensitive""",

370 )

371 parser.add_argument("--device", type=str, default="mncore2:auto")

372 parser.add_argument("--outdir", type=str, default="/tmp/mlsdk_ssd_inference/out")

373 parser.add_argument(

374 "--option_json",

375 type=Path,

376 default="/opt/pfn/pfcomp/codegen/preset_options/O1.json",

377 )

378 args = parser.parse_args()

379

380 # Validate arguments

381 assert 0 <= args.threshold and args.threshold <= 1.0

382 assert not args.out_img_dir.is_file(), "Please specify directory path."

383

384 args.out_img_dir.mkdir(parents=True, exist_ok=True)

385

386 run_infer(

387 img_path=args.img_path,

388 model_path=args.model_path,

389 coco_data_path=args.coco_data_path,

390 coco_annotation_path=args.coco_annotation_path,

391 out_img_dir=args.out_img_dir,

392 threshold=args.threshold,

393 device_name=args.device,

394 outdir=args.outdir,

395 option_json_path=args.option_json,

396 )

397

398

399if __name__ == "__main__":

400 main()

1matplotlib

2pycocotools

3defusedxml

1import argparse

2import json

3import os

4from typing import Any

5

6

7def fetch_imgs_and_ids(

8 json_obj: dict[str, Any], license_id_list: list[int]

9) -> tuple[list[list[dict[str, int | str]]], list[int]]:

10 img_list = []

11 id_list = []

12 for i in json_obj["images"]:

13 if i["license"] in license_id_list:

14 img_list.append(i)

15 id_list.append(i["id"])

16

17 return img_list, id_list

18

19

20def fetch_annotations(json_obj: dict[str, Any], id_list: list[int]) -> list[Any]:

21 ann_list = []

22 for i in json_obj["annotations"]:

23 if i["image_id"] in id_list:

24 ann_list.append(i)

25

26 return ann_list

27

28

29def remove_wasted_imgs(

30 json_obj: dict[str, Any], fetched_dict: dict[str, Any], img_dir: str | os.PathLike

31) -> None:

32 org_file_names = set([i["file_name"] for i in json_obj["images"]])

33 fetched_file_name = set([i["file_name"] for i in fetched_dict["images"]])

34 diff_set = org_file_names - fetched_file_name

35 for i in diff_set:

36 wasted_img_path = os.path.join(img_dir, i)

37 if os.path.exists(wasted_img_path):

38 os.remove(wasted_img_path)

39

40

41def main() -> None:

42 parser = argparse.ArgumentParser()

43 parser.add_argument(

44 "-a",

45 "--annotation_file",

46 type=str,

47 default="./coco/annotations/instances_val2017.json",

48 )

49 parser.add_argument("-i", "--images_dir", type=str, default="./coco/val2017/")

50 parser.add_argument(

51 "-f",

52 "--fetched_annotation_file",

53 type=str,

54 default="./coco/annotations/fetched_annotations.json",

55 )

56 args = parser.parse_args()

57

58 # fetch images' infomation with no license problems

59 json_obj = None

60 with open(args.annotation_file, mode="r") as f:

61 json_obj = json.load(f)

62 license_id_list = [4] # 4: CC-BY 2.0

63

64 img_list, id_list = fetch_imgs_and_ids(json_obj, license_id_list)

65 ann_list = fetch_annotations(json_obj, id_list)

66 fetched_dict = (

67 {"info": json_obj["info"]}

68 | {"licenses": json_obj["licenses"]}

69 | {"images": img_list}

70 | {"annotations": ann_list}

71 | {"categories": json_obj["categories"]}

72 )

73

74 # dump fetched infomations

75 with open(args.fetched_annotation_file, mode="w") as f:

76 json.dump(fetched_dict, f)

77

78 # remove files unused in inference

79 remove_wasted_imgs(json_obj, fetched_dict, args.images_dir)

80

81

82if __name__ == "__main__":

83 main()